By Paul De Hert* and Andrés Chomczyk Penedo**

* Professor at Vrije Universiteit Brussel

(Belgium) and associate professor at Tilburg University (The Netherlands)

** PhD Researcher at the Law, Science,

Technology and Society Research Group, Vrije Universiteit Brussel (Belgium).

Marie Skłodowska-Curie fellow at the PROTECT ITN. The author has received

funding from the European Union’s Horizon 2020 research and innovation

programme under the Marie Skłodowska-Curie grant agreement No 813497

1. Dealing with online misinformation: who is in

charge?

Misinformation

and fake news are raising concerns for the digital age, as discussed by Irene

Khan, the United Nations Special Rapporteur on the promotion and protection of

the right to freedom of opinion and expression (see here). For example, during

the last two years, the COVID19 crisis caught the world by surprise and

considerable discussions about the best course of action to deal with the

pandemic were held. In this respect, different stakeholders spoke up but not

all of them were given the same possibilities to express their opinion. Online

platforms, but also traditional media, played a key role in managing this

debate, particularly using automated means (see here).

A climate of

polarization developed, in particular on the issue of vaccination but also

around other policies such as vaccination passports, self-tests, treatment of

the virus in general, or whether the health system should focus on ensuring

immunity through all available strategies (see here). Facebook, YouTube,

and LinkedIn, just to name a few, stepped in and started delaying or censoring

posts that in one way or another were perceived as harmful to governmental

strategies (see here). While

the whole COVID19 crisis deserves a separate discussion, it serves as an example

of how digital platforms are, de facto,

in charge of managing online freedom of expression and, from a practical point

of view, have the final say in what is permissible or not in an online

environment.

The term

‘content’ has been paired with adjectives such as clearly illegal, illegal and

harmful, or legal but harmful, just to name the most relevant ones. However,

what does exactly each of these categories entail, and why are we discussing

these categories? What should be the legal response, if any, to a particular

piece of content and who should address it? While content and its moderation are

not new phenomena, as Irene Khan points out in her previously mentioned report,

technological developments, such as the emergence and consolidation of

platforms, demand new responses.

With this

background, the European Union is currently discussing, at a surprisingly quick

pace, the legal framework for this issue through the Digital Services Act

(the DSA, previously summarised here).

The purpose of this contribution is to explore how misinformation and other

categories of questionable content are tackled in the DSA and to highlight the option

taken in the DSA to transfer government-like powers (of censorship) to the

private sector. Two more democratic alternatives are sketched. The first is based

on the distinction between manifestly illegal content and merely illegal

content to distribute better the workload between private and public

enforcement of norms. A second alternative consists in community-based content

moderation as an alternative or complementary strategy next to platform-based

content moderation.

2. What is the DSA?

The DSA (see here

for the full text of the proposal and here

for its current legislative status) is one of the core proposals in the

Commission’s 2019-2024 priorities, alongside the Digital Markets Act (discussed

here),

its regulatory ‘sibling’. It intends to refresh the rules provided for in the

eCommerce Directive and deal with certain platform economy-related issues under

a common European Union framework. It covers topics such as: the liability of intermediary

service providers (building on and expanding the eCommerce Directive regime);

due diligence obligations for a transparent and safe online

environment (including notice and takedown mechanisms, internal

complaint-handling systems, trader traceability, and advertising practices); risk

management obligations for very large online platforms; and the distribution of

duties between the European Commission and the Member States. Many of these

topics might demand further regulatory efforts beyond the scope of the DSA,

such as political advertising, which would be complemented by sector-specific

rules such as the proposal for a Regulation on the Transparency and

Targeting of Political Advertising (see here).

As of late

November 2021, the Council has adopted a general approach to the Commission’s

proposal (see here)

while the European Parliament is still dealing with the discussion of possible

amendments and changes to that text (see here).

Nevertheless, as with many other recent pieces of legislation (see here),

its adoption is expected sooner rather than later, in the coming

months.

3. Unpacking Mis/Disinformation (part 1): illegal

content as defined by Member States

We started by discussing

misinformation and fake news. If we look at the DSA proposal, the term 'fake

news' is missing from all its sections. However, the concept of misinformation appears as disinformation in Recitals 63, 68, 69,

and 71. Nevertheless, neither term is to be found in the Articles of the

DSA proposal.

In literature,

the terms are used interchangeably or are distinguished, with disinformation defined as the

intentional and purposive spread of misleading information, and misinformation as ‘unintentional

behaviors that inadvertently mislead’ (see here).

But that distinction does not help in distinguishing either mis- or disinformation

from other categories of content.

Ó Fathaigh,

Helberger, and Appelman (see here)

have pointed out that disinformation, in particular, is a complex concept to tackle

and that very few scholars have tried to unpack its meaning. Despite the

different policy and scholarly efforts, a single unified definition of mis- or

disinformation is still lacking, and the existing ones can be considered as too

vague and uncertain to be used as legal definitions. So, where shall we start

looking at these issues? A starting point, so we think, is the notion of

content moderation, which according to the DSA proposal, is defined as follows:

'content moderation' means the activities undertaken by providers of

intermediary services aimed at detecting, identifying, and addressing

illegal content or information incompatible with their terms and conditions,

provided by recipients of the service, including measures taken that affect the

availability, visibility, and accessibility of that illegal content or that

information, such as demotion, disabling of access to, or removal thereof, or

the recipients' ability to provide that information, such as the termination or

suspension of a recipient's account (we underline);

Under this

definition, content moderation is an activity that is delegated to providers of

intermediary services, particularly online platforms, and very large online

platforms. Turning to the object of the moderation, we can ask: what exactly is

being moderated under the DSA? As mentioned above, moderated content is usually

associated with certain adjectives, particularly illegal and harmful. The DSA

proposal only defines illegal content:

‘illegal content’ means

any information, which, in itself or by its reference to an activity, including

the sale of products or provision of services is not in compliance with Union

law or the law of a Member State, irrespective of the precise subject matter or

nature of that law;

So far, this

definition should not provide much of a challenge. If the law considers something

illegal, it makes sense that it is addressed in the online environment in the same way as

in the physical realm. For example, a pair of fake sneakers constitutes a

trademark infringement, regardless of whether the pair is being sold via eBay or by

a street vendor in Madrid’s Puerta del Sol. In legal practice, regulating

illegal content is not black and white. A distinction can be made between clearly illegal content and situations where further exploration must be conducted to determine the illegality of

certain content. This is how it is framed in the German NetzDG, for example.

In some of the DSA proposal articles, mainly Art. 20, we can see the

distinction between manifestly illegal content and illegal content. However,

this distinction is not picked up again in the rest of the DSA proposal.

What stands is that

the DSA proposal does not expressly cover disinformation but concentrates on

the notion of illegal content. If Member State law defines and prohibits mis- or

disinformation (an area that Ó Fathaigh, Helberger and Appelman have reviewed and

found to be inconsistent across the EU), then this would fall under the DSA

category of illegal content. Rather than creating legal certainty, this further

reinforces legal uncertainty and pegs the notion of illegal content to be

dependent on each Member State's provisions. But where does this leave disinformation

that is not regulated in Member State laws? The DSA does not like it, but

its regulation is quasi hidden.

4. Unpacking Mis/Disinformation (part 2): harmful

content not defined by the DSA

The foregoing

brings us to the other main concept dealing with content in the DSA, viz. harmful

content. To say that this is a (second) 'main' concept might confuse the

reader, since the DSA does not define it or regulate it at great length. The DSA’s explanatory memorandum states that ‘[t]here is a general agreement among

stakeholders that ‘harmful’ (yet not, or at least not necessarily, illegal)

content should not be defined in the Digital Services Act and should not be

subject to removal obligations, as this is a delicate area with severe

implications for the protection of freedom of expression’.

As such, how can

we define harmful content? This

question is by no means new: policy documents from the

European Union dealing with this problem date back to 1996 (see here).

Since then, little has changed in the debate

surrounding harmful content as the core idea remains untouched: harmful content

refers to something that, depending on the context, could affect somebody due

to it being unethical or controversial (see here).

In this respect,

the discussion on this kind of content does not tackle a legal problem but

rather an ethical, political, or religious one. As such, it is valid to ask

whether laws and regulations should even intervene in this scenario. In

other words, does it make sense to talk about legal but harmful content when we discuss new regulations? Should

our understanding of illegal and harmful content be construed as broadly as

possible, to accommodate the greatest number of situations, in order to avoid this

issue? And more importantly, if the content seems to be legal, does it make

sense to add the adjective of ‘harmful’ rather than using, for example,

‘controversial’? Regardless of the terminology used, this situation leaves us

with three types of content categories: (i) manifestly illegal content; (ii)

illegal content, whether harmful or not; (iii) legal but harmful content. Each

of them demands a different approach, which shall be the topic of our following

sections.

5. Illegal content moderation mechanisms in the DSA

(content types 1 & 2)

The DSA puts

forward a clear, but complex, regime for dealing with all kinds of illegal

content. As a starting point, the DSA proposal provides for a general no-monitoring regime for all

intermediary service providers (Art. 7) with particular conditions for mere

conduits (Art. 3), caching (Art. 4), and hosting service providers (Art. 5). However,

voluntary own-initiative investigations are

allowed and do not compromise this liability exemption regime (Art. 6). In any

case, once a judicial or administrative

order mandates the removal of content, this order has to be followed to

avoid incurring liability (Art. 8). In principle, public bodies (administrative

agencies and judges) have control over what is illegal and when something should

be taken down.

However, beyond

this general regime, there are certain stakeholder-specific obligations spread

out across the DSA proposal also dealing with illegal content that challenge the

foregoing state-controlled mechanism. In this respect, we can point out the mandatory

notice and takedown procedure for hosting providers with a fast lane for

trusted flaggers' notices (Arts. 14 and 19, respectively), in addition to the

internal complaint-handling system for online platforms paired with the

out-of-court dispute settlement (Arts. 17 and 18, respectively) and, in the

case of very large online platforms, these duties should be adopted following a

risk assessment process (Art. 25). With this set of provisions, the DSA grants

a considerable margin to certain entities to act as law enforcers and judges,

without a government body having a say in whether something was illegal and whether its

removal was the correct decision.

6. Legal but harmful content moderation mechanisms in

the DSA (content type 3)

But what about our

third type of content, legal but harmful content, and its moderation? Without

dealing with the issue of content moderation directly, the DSA transfers the

delimitation of this concept to providers of online intermediary services,

mainly online platforms. In other words, a private company can limit apparently

free speech within its boundaries. In this respect, the DSA proposal grants all

providers of intermediary services the possibility of further limiting what

content can be uploaded and how it shall be governed via the platform’s terms

and conditions and, by doing so, these digital services providers are granted

substantial power in regulating digital behavior as they see fit:

‘Article 12 Terms and conditions

1. Providers of intermediary services shall include information on any

restrictions that they impose concerning the use of their service in

respect of information provided by the recipients of the service, in their

terms and conditions. That information shall include information on any

policies, procedures, measures, and tools used for content moderation,

including algorithmic decision-making and human review. It shall be set out in

clear and unambiguous language and shall be publicly available in an easily

accessible format.

2. Providers of intermediary services shall act in a diligent,

objective, and proportionate manner in applying and enforcing the restrictions

referred to in paragraph 1, with due regard to the rights and legitimate

interests of all parties involved, including the applicable fundamental rights

of the recipients of the service as enshrined in the Charter.’

In this respect,

the DSA consolidates a content moderation model heavily based around providers

of intermediary services, and in particular, very large online platforms, acting

as lawmakers, law enforcers, and judges at the same time. They are lawmakers as

the terms and conditions lay down what is permitted as well as forbidden in the

platform. While there isn't a general obligation to patrol the platform, they must

react to notices from users and trusted flaggers and enforce the terms if

necessary. And, finally, they act as judges by attending to the replies from

the user who uploaded illegal content and dealing with the parties involved in

the dispute, notwithstanding the alternative means provided for in the DSA.

Rather than

using the distinction between manifestly illegal content and ordinary illegal

content and refraining from regulating other types of content, the DSA creates

a governance model for moderation of all content in the same manner. While

administrative agencies and judges can request content to be taken down, under

Art. 8, the development of the further obligations mentioned above poses the

following question: who bears the main responsibility for defining what is illegal and

what is legal? Is it the existing institutions, subject to checks and balances, or

rather private parties, particularly BigTech and very large online platforms?

7. The privatization of content moderation: the second

(convenient?) invisible handshake between the States and platforms

As seen with

many other areas of the law, policymakers and regulators have slowly but

steadily transferred government-like responsibilities to the private sector

and mandated their compliance relying on a risk-based approach. For example, in

the case of financial services, banks and other financial services providers

have turned into the long arm of financial regulators to tackle money

laundering and tax evasion rather than relying on government resources to do

this. This resulted in financial services firms having to process vast amounts

of personal data to determine whether a transaction is illegal (either because

it launders criminal proceeds or evades taxes) with nothing but their

planning and some general guidelines; if they fail in this endeavor,

administrative fines (and in some cases, criminal sanctions) can be expected. The

result has been an ineffective system to tackle this problem (see here)

yet regulators keep on insisting on this approach.

A little shy of

20 years ago, Birnhack and Elkin-Koren denounced the existence of an invisible

handshake between States and platforms for the protection and sake of national

security after the 9/11 terror attacks (see here).

At that time, this invisible handshake could be considered by some as necessary

to deal with an international security crisis. Are we in the same situation as

we speak when it comes to dealing with disinformation and fake news? This is a

valid question. EU policymakers seem to be impressed by voices such as

Facebook’s whistleblower Frances Haugen, who wants to align 'technology and

democracy' by enabling platforms to moderate posts. The underlying assumption

seems to be that platforms are in the best position to moderate content

following supposedly clear rules and that 'disinformation' can be identified (see

here).

Content

moderation presents a challenge for States given the amount of content

generated non-stop across different intermediary services, in particular,

social media online platforms (see here).

Facebook employs a sizable staff of almost 15,000 individuals as content

moderators (see here)

but also relies heavily on automated content moderation, authorized by the DSA proposal

under Arts. 14 and 17, in particular, to mitigate mental health problems for

those human moderators, given the inhuman content they sometimes have to engage

with. To put this in comparison, using the latest available numbers from the Council

of Europe about the composition of judiciary systems in Europe (see here),

the Belgian judiciary employs approximately 9,200 individuals (the entire

judiciary, dealing with issues ranging from commercial law to criminal cases), a

little more than half of Facebook’s content moderators.

As such, one can

argue that courts could be easily overloaded with cases that demand a quick and

agile solution for defining what is illegal or harmful content if platforms

didn't act as a first-stage filter for content moderation. Governments would

need to heavily invest in administrative or judicial infrastructure and human

resources to deal with such demand from online users. This matter has been discussed

by scholars (see here).

The available options they see are either (i) strengthening platform content

moderation by requiring the adoption of judiciary-like governance schemes, such

as social media councils as Facebook has done; or (ii) implementing e-courts

with adequate resources and procedures suited to the needs of the digital age

to upscale our existing judiciary.

8. The consequences of the second invisible handshake

The DSA seems to

have, willingly or not, decided on the first approach. Via this approach (the

privatization of content moderation), States do not have to deal with the lack

of judicial infrastructure to deal with the amount of content moderation that

digital society requires. As shown by our example, Facebook has an

infrastructure, in terms of raw manpower alone, that is more than one and a half times that of a

country’s judiciary, such as Belgium’s. This second invisible handshake between

BigTech and States can be situated in the incapacity of States to deal with disinformation

effectively with the current legal framework and institutions.

If the DSA

proposal is adopted ‘as is’, then platforms would have significant power over

individuals. First, through the terms and conditions, they would be in a position to

determine what is allowed to be said and what cannot be discussed, as provided

for by Art. 12. Not only that, but any redress against decisions adopted by

platforms would have to be first channeled through the internal complaint

handling mechanisms, as provided for by Arts. 17 and 18, for example, rather

than seeking judicial remedy. As can be appreciated, the balance of power has

clearly shifted towards platforms, and by extension to governments, to the

detriment of end-users.

Besides this,

the transfer of government-like powers to platforms contributes to avoiding

making complicated and hard decisions that could cost political reputation.

Returning to our opening example, the lack of a concrete decision from our

governments regarding sensitive topics has left platforms in charge of choosing

what is the best course of action to tackle a worldwide pandemic by defining

when something is misinformation that can affect public health and when something

could help fight back against a situation that is out of control. Not only that, but if

platforms wrongfully approach the issue, then they are exposed to fines for

non-compliance with their obligations, although particularly very large online

platforms can deal with the fines proposed under the DSA.

If the second

invisible handshake is going to take place, the least we, as a society, deserve

is that the agreement is made transparent so that such practices are subject to public

scrutiny and free speech can be safeguarded. In this respect, the DSA

could have addressed the issue of misinformation and fake news in a more

democratic manner. Two proposals:

9. Addressing disinformation more democratically to

align 'technology and democracy'

Firstly, the distinction

between manifestly illegal content and merely illegal content could have been

extremely helpful in distributing the workload between the private and public

sector in a manner that administrative authorities and judges would only take

care of cases where authoritative legal interpretation is necessary. As such,

manifestly illegal content, such as apology of crime or intellectual property

infringements, could be handled directly by platforms and merely illegal

content by courts or administrative agencies. In this respect, a clear

modernization in legal procedures to deal with claims about merely illegal

content would still be necessary to adjust the legal response time to the speed

of our digital society. Content moderation is not alone in this respect but

joins the ranks of other mass-related issues, such as consumer protection,

where effective legal protection is missing due to the lack of adequate

infrastructure to channel complaints.

Secondly, as for legal but

harmful content, while providers of online intermediary services have a right

to conduct their business as they see fit and therefore can select which

content is allowed or not via terms and conditions, citizens do have a valid

right to engage directly in the discussion of those topics and determine how to

proceed with them. This is even more important as users themselves are the ones

interacting on these platforms and that content is exploited by platforms to

ensure that controversy remains on the table to ensure engagement (see here).

However, there

is a possibility to deal with content moderation, particularly in the case of

legal but harmful content, that avoids a second invisible handshake: community-based

content moderation strategies (see here)

in which users have a more active role in the management of online content, have

proven to be successful on certain online platforms. While categories such as clearly illegal or illegal and harmful

content do not provide much margin for societal interpretation, legal but harmful content could be

tackled by citizens' involvement. In this respect, community-based approaches,

while resource-intensive, allow for citizens to engage directly in the debate about

the issue at hand.

While

community-based content moderation also has its own risks, it could serve as a

more democratic method than relying on platforms’ unilateral decisions and it

might serve where judges and administrative agencies cannot go due to the legality

of content. As noted by the Office of the United Nations High Commissioner for

Human Rights, people, rather than technology, should be making the hard

decisions but also States, as elected representatives of society, need to make

decisions about what is illegal and what is legal (see here).

Our alternatives

are only a part of a more complete program. Further work is needed at policy

level to address fake news. Sad as it may be, the matter has not yet matured

and is not yet ripe for regulation. While the phenomenon of political actors actively

spreading misleading information (the twittering lies told by political

leaders) is well known and widely discussed, the role of traditional news media, which

are supposed to be the bearers of truth and factual accuracy, is less well

understood. Traditional news media are in fact a part of the problem, and play

a somewhat paradoxical role with respect to fake news and its dissemination.

People learn about fake news, not via obscure accounts that Facebook and others

can control, but through regular media that find it important for many reasons

to report on disinformation. Tsfati and others (see here)

rightly ask for more analysis and collaborations between academics and

journalists to develop better practices in this area.

We are also surprised

by the lack of attention in the DSA proposal to the

algorithmic and technological dimension that seems central to the issue of

fake news. More work is needed on the consequences of algorithmic production of

online content. More work too is needed to assess the performance of

technological answers to technology. How

to organize a space of contestation in a digitally mediated and enforced world?

Are the redress mechanisms in the DSA sufficient when the post has already been

deleted, i.e. "delete first, rectify after"?

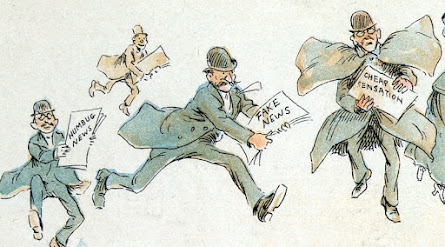

Art credit: Frederick

Burr Opper, via wikimedia

commons
