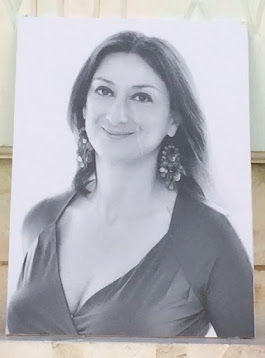

Samira Asmaa Allioui, research and tutorial fellow at the Centre d'études internationales et européennes de l'Université de Strasbourg

Photo credit: Bin im Garten, via Wikimedia Commons

Journalists are under pressure in different ways. Over the last few years, media freedom and especially media pluralism have been in peril.

On December 15, 2023, the

European Council and the European Parliament struck a deal on rules to

safeguard media freedom, media pluralism and editorial independence in the

European Union. The EU

Media Freedom Act (EMFA) promised increased transparency about media

ownership and safeguards against government surveillance and the use of spyware

against journalists. The agreement comes after numerous revisions of the Audiovisual Media Services Directive (AVMSD) and new regulations such as the Digital Markets Act (DMA) and Digital Services Act (DSA). As a reminder, the EMFA builds on the DSA.

The aim of this contribution is

to present an overview of the EMFA and specifically to analyse to what extent its

rules still contribute to the limitation of freedom of speech, the erosion of

trust, the breach of democratic processes, disinformation, and legal

uncertainty.

The EMFA requires EU countries to respect editorial freedom, refrain from using spyware and from political interference, provide stable funding for public media, protect online media and ensure transparent state advertising. It established a European

watchdog: a new independent European

Board for Media Services to fight interference from inside and outside the

EU.

Nevertheless, this new EU

legislation tries to set boundaries for the journalists’ actions through Article

18 EMFA on the protection of media content on very large online platforms

(VLOPs), and the potential detrimental effects of introducing something akin to

a media exemption. But the most significant ambiguity lies in Article 2 of the EMFA, on the definition of ‘media service’, which appears to be the problem everyone acknowledges. This raises the question of who the EMFA is protecting. Are

democracy and the possibility for people to get impartial and unbiased

information really strengthened? It should not be forgotten that, for the European Parliament elections, there is a potential danger of political interference by non-European countries that will try to take advantage of democratic elections to influence the media illegally, by creating fake social media accounts and by launching massive propaganda campaigns to disseminate conflict-ridden content.

THE ACCURACY OF INFORMATION

The EMFA focuses on two main points

regarding VLOPs. First, it asserts that platforms limit users’ access to

reliable content when they apply their terms and conditions to media companies

that practice editorial responsibility and create news conforming with

journalistic standards. The Regulation thus takes aim at VLOPs’ gatekeeping

power over access to media content. To do so, the EMFA aims to remould the

relationship between media and platforms. Media service providers that exercise

editorial responsibility for their content have a primary role in the

dissemination of information and in the exercise of freedom of information

online. In exercising this editorial responsibility, they are expected to

intervene diligently and provide reliable information that complies with

fundamental rights, in accordance with the regulatory or self-regulatory

requirements to which they are subject in the Member States.

Secondly, it asserts that quality media can help to fight disinformation. To address this problem, the EMFA’s objective is to adjust

the connection between platforms and media. According to Article 2 EMFA, ‘media

service’ means ‘a service as defined by Articles 56 and 57 [TFEU], where the

principal purpose of the service or a dissociable section thereof consists in

providing programmes or press publications, to the general public, under the

editorial responsibility of a media service provider, by any means, in order to

inform, entertain or educate’. A ‘media

service’ has

some protections under the Act. According to Joan Barata, the media

definition under EMFA is an overly “limited” definition, which is not “aligned”

with international and European human rights standards, and “discriminatory”,

as it excludes “certain forms of media and journalistic activity”. The DSA classifies

platforms or search engines that have more than 45 million users per month

in the EU as VLOPs or Very Large Online Search Engines (VLOSEs). As an

illustration, according to Article 18 EMFA, media service providers will be afforded

special transparency and contestation rights on platforms. In addition to that,

according to Article

19 EMFA, media service providers will have the opportunity to engage in a

structured dialogue with platforms on concepts such as disinformation. Under

the agreement, VLOPs will have to inform media service providers that they plan

to remove or restrict their content and give them 24 hours to answer (except in

the event of a crisis as defined in the DSA).

Article 18 of the EMFA enforces a 24-hour content moderation exemption for media, effectively forcing platforms to host content. The rule thereby prevents large online platforms from deleting media content that violates community guidelines. Not only could this threaten marginalised groups, but it could also undermine equality of speech and fuel disinformation.

This is a vicious

circle between the speaker planting false information on social media, the

media platform spreading the false speech thanks to amplifying algorithms or

human-simulating bots, and the recipients who view the claims and spread them.

The EMFA provides

that, before signing up to a social media platform, platforms must create a

“special/privileged communication channel” to consider content restrictions

with “media service providers”, defined as “a natural or legal person whose

professional activity is to provide a media service and who has editorial

responsibility for the choice of the content of the media service and

determines the manner in which it is organised”. In other words, instead of being

forced to host any content, online platforms should provide special privileged

treatment to certain media outlets.

However, not only does this

strategy impede platforms’ autonomy in enforcing their terms of use (nudity,

disinformation, self-harm) but it also imperils the protection of marginalised

groups who are frequently

the main targets of disinformation and hate speech. Politics remains

fertile ground for hate speech as well as disinformation. Online platforms and

social media have played a key role in amplifying the spread of hate speech and

disinformation. As proof, recent reports reveal the widespread abuse of these

platforms by political parties and governments. Indeed, it turns out that more

than 80 countries around the world have engaged in political disinformation

campaigns.

This could also allow misleading information to remain online long enough to be transmitted and disseminated, hindering one of the key objectives of the EMFA: to give citizens more reliable sources of information.

ABUSIVE REGULATORY INTERVENTION AND DETERIORATION OF TRUST

First, one can only be concerned

about any regulatory intervention by governments on issues such as freedom

of expression or media freedom. Through their EU Treaty competencies in

security and defence matters, EU Member States seem to be winning because their

options to spy on reporters have been reaffirmed. However, according to the

final text (April 11, 2024), the European Parliament added important safeguards on the use of spyware, which will only be possible on a case-by-case

basis and subject to authorization from an investigating judicial authority as

regards serious offenses punishable by a sufficiently long custodial sentence.

Furthermore, it must be

emphasized that even in these cases the subjects will have the right to be

informed after the surveillance and will be able to challenge it in court. It

is also specified that the use of spyware against the media, journalists and

their families is prohibited. In the same vein, the rules specify that

journalists should not be prosecuted for having protected the confidentiality

of their sources.

The law restricts possible

exceptions to this for national security reasons which fall within the

competence of member states or in cases of investigations into a closed list of

crimes, such as murder, child abuse or terrorism. Even in such situations or cases of neglect, the law makes it very clear that this must be duly justified,

on a case-by-case basis, in accordance with the Charter of Fundamental Rights,

in circumstances where no other investigative tool would be adequate.

The law therefore provides new concrete guarantees at EU level in this regard. Any journalist

concerned would have the right to seek effective judicial protection from an

independent court in the Member State concerned. In addition to that, each

Member State will have to designate an independent authority responsible for

handling complaints from journalists concerning the use of spyware against

them. These independent authorities will provide, within three months of the

request, an opinion on compliance with the provisions of the law on media

freedom.

Some governments in Europe have recently tried to interfere in the work of journalists, a blatant demonstration of how far politicians can go against the media using national security as an excuse. To avoid an erosion of trust, media service providers

must be totally transparent about their ownership structures. That is why, in

its final version (April 2024), the EMFA enhances transparency of media

ownership, responding to rising concerns in the EU about this issue. The EMFA broadens

the scope of the requirements of transparency, providing for rules guaranteeing

the transparency of media ownership and preventing conflicts of interest (Article

6) as well as the creation of a coordination mechanism between national

regulators in order to respond to propaganda from hostile countries outside the

EU (Article 17).

To do that, safeguards need to be deepened to shield all media against economic capture by private owners. The situation can be worse when the absence of official intervention means non-transparent and selective support for pro-government media. This demonstrates that a combination of political pressure and corruption can be risky for the free press.

Secondly, the EMFA’s content

moderation provisions could ruin public trust in media and endanger the

integrity of information channels. Online platforms moderate illegal content

online. Moderation provisions include: a solution-orientated conversation

between the parties (VLOPs, the media and civil society) to avoid unjustified

content removals; obligatory annual reporting (reports on content moderation

which must include information about the moderation initiative, including

information relating to illegal content, complaints received under

complaints-handling systems, use of automated tools and training measures) by

very large online platforms (VLOPs); any complaint lodged under

complaints-handling systems by media service providers must be processed with

priority; and additional protection against the unjustified removal by VLOPs of

media content produced according to professional standards. These platforms

will need to take every precaution to communicate the reasons for suspending

content to media service providers before the suspension becomes effective. The

process consists of a series of safeguards to ensure that this rapid alert

procedure is consistent with the European Commission’s priorities such as the

fight against disinformation. In this regard, the Electronic Frontier

Foundation states that “By creating a special class of privileged self-declared media providers whose content cannot be removed from big tech platforms, the law not only changes company policies but risks harming users in the EU and beyond”.

MEDIA COMPANIES AND PLATFORMS BARGAINING CONTENT

Yet the EMFA still does not deal with the complex issue of who would be responsible for verifying the self-declarations (Article 18(1) EMFA). More precisely, according to Article 18 EMFA, “Providers of [VLOPs]

shall provide a functionality allowing recipients of their services to declare”

that they are media service providers. This self-declaration rests mainly on three criteria: that the providers fulfil the definition of Article 2 EMFA; that they “declare that they are editorially independent from Member States, political parties, third countries and entities owned or controlled by third countries”; and that they

“declare that they are subject to regulatory requirements for the exercise of

editorial responsibility in one or more Member States” or adhere “to a

co-regulatory or self-regulatory mechanism governing editorial standards that

is widely recognised and accepted in the relevant media sector in one or more

Member States”. According to Article 18(4), when a VLOP decides to suspend its

services regarding the content provided by a self-declared media service

provider, “on the grounds that such content is incompatible with its terms and

conditions”, it must “communicate to the media service provider concerned a statement

of reasons” accompanying that decision “prior to such a decision to suspend or

restrict visibility taking effect”.

Aside from that, Article 18 EMFA fragments the rules laid down by the Digital Services Act (DSA), a horizontal instrument that aims to create a more trustworthy online environment by putting in place a multilevel framework of responsibilities targeted at different types of services and by proposing a set of asymmetric obligations harmonized at EU level with the aim of ensuring transparency, accountability and regulatory oversight of the EU online space. The DSA sets the rules covering all services and all types of illegal content, including goods or services. This implies that media regulators will

be enrolled in the cooperation mechanisms that will be set up for the aspects

falling under their mandate. The inception of a specific “structured

cooperation” mechanism is intended to contribute to strengthening robustness,

legal certainty, and predictability of cross-border regulatory cooperation.

This entails enhanced

coordination and more precisely collective deliberation between national

regulatory authorities (NRAs) which can bring significant added value to the

application of the EMFA. How this will look in practice is, however, still unclear.

Above all, how will the new

legislation be applied in practice and how will it work to ensure that it

neither undermines the equality of speech and democratic debate nor endangers

vulnerable groups? Although Article 18 of the EMFA incorporates safeguards on AI-generated content, the details of which remain undisclosed as of now (see also Hajli

et al on ‘Social Bots and the Spread of Disinformation in Social Media’ and

Vaccari

and Chadwick on ‘Deepfakes and Disinformation’), there is clearly reason to

be concerned about the use of generative AI to promote disinformation and deep

fakes. In an era where new technologies dominate, voluntary guidelines are not

enough. Stronger measures are urgently needed to protect free speech while retaining control over AI systems. It is widely acknowledged that, while AI can be an excellent tool for journalists, it can also be misused.

INEQUALITY BETWEEN MEDIA PROVIDERS: THE ATTRIBUTION OF A SPECIAL STATUS

As regards platforms and media companies negotiating content, the fact that not all media providers will receive a special status creates inequality.

Platforms will have to guarantee that most of the reported information is

publicly accessible. The main privilege resulting from this special status is that VLOP providers are more restricted in the way they moderate the content, not through a ban on acting against this content but through enhanced transparency and information duties towards the media provider concerned.

This effectively leads to an uncertain negotiation situation in which

influential media and platforms negotiate over what content remains visible.

This is especially true since the media have financial interests in seeking a

rapid means of communication and in ensuring that their content remains visible

even if it is at the expense of small providers.

CONCLUSION

In conclusion, the risk of public opinion being manipulated by disinformation and propaganda disguised as legitimate media content is still reflected in Article 18’s self-proclamation mechanism. On top of that, the fragmentation of legislation, which is not aligned with the DSA, risks establishing two categories of freedom of speech. Our capacity to make informed decisions could also be undermined by Article 18 EMFA, which allows self-proclaimed media entities to operate with insufficient oversight. Furthermore, our democratic processes risk being severely damaged by the unregulated spread of disinformation. Finally, the opacity of Article 18 as to how the authenticity of self-proclaimed media is determined creates problems of compliance and enforcement.

The elements recalled here highlight the downside of the new legislation and corroborate that efforts

must be made in the future to remedy the critical situation of press freedom

within the EU.